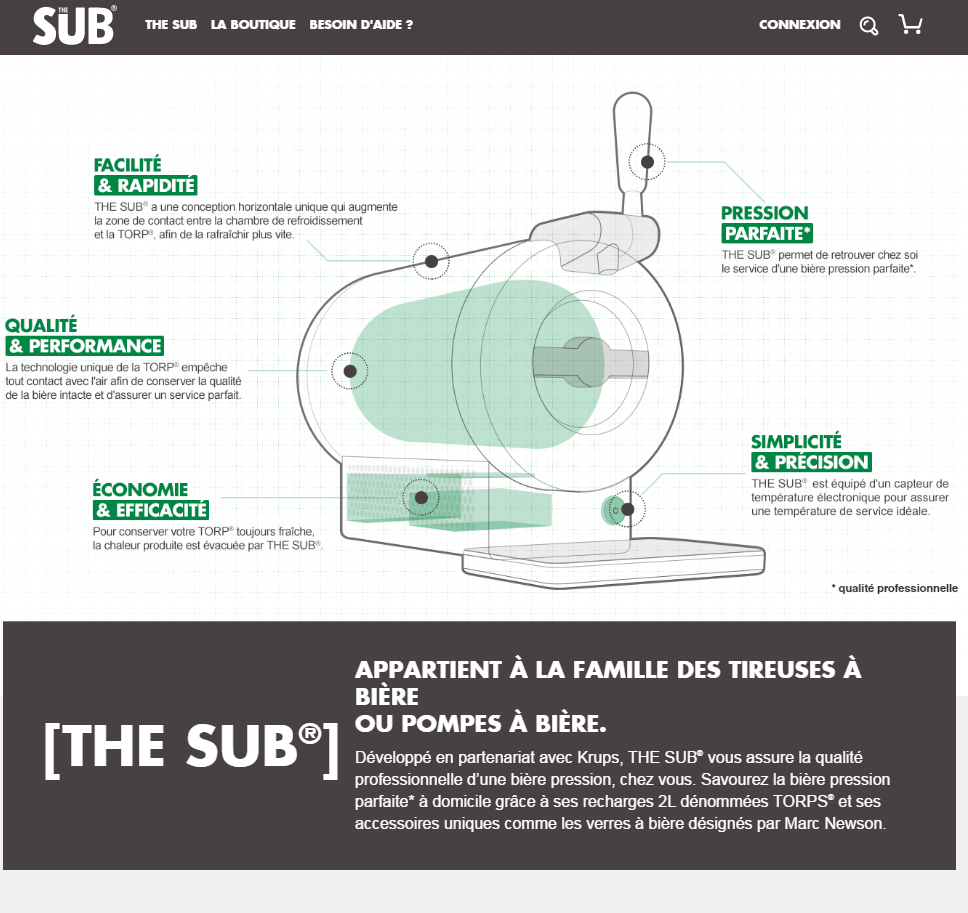

After our tech presentation app for Heineken’s The Sub® was used as part of the interactive elements in a pop-up store in Paris, running on iPad Air 2s, we were asked to build a browser version that would be accessible to the public as part of The Sub®’s website.

The color scheme would change, yet all functionality would be kept.

Now, Unity does export to WebGL, yet the bulky WebGL build and the unexpected results we got when first trying out the export led us to decide on using Three.js and rebuilding the app from scratch. It would be lighter and we’d have finer control over the result.

We would be writing this in Haxe. No specific reason for this, except for cleaner code… and to code faster… even though we would not be targeting another language.

The Model

The model used for the mobile version of this app was way too complex. In fact, it could still be considered too high-res, but we had to have good-quality results even if that meant keeping a few browsers out. This app would only run on WebGL-capable browsers and devices, inside a div with an entire website around it. Others would get an image of The Sub® with all of its key elements described.

To export it from FBX to Three.js, the first idea was to use the Autodesk FBX SDK along with an export script in Python. This worked perfectly. Yet… what about all the additional Unity objects in the original Unity scenes?

For the touch icons to fade once they are behind the object when we rotate around it, a set of box, capsule and cylinder colliders was placed on the object. We would continuously check whether the object was blocking the view of an icon and switch that icon between alpha 0.5 and alpha 1 depending on whether it was visible. Because we’d need the same functionality, we would ideally need the same set of colliders, so as to raycast against simple shapes rather than the full complex object and not waste CPU time.
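The occlusion check described above can be sketched roughly as follows. This is a minimal TypeScript sketch, not the project's Haxe code: it assumes axis-aligned box colliders only (the original also used capsules and cylinders), the names `segmentHitsBox` and `iconAlpha` are hypothetical, and it assumes a hidden icon gets alpha 0.5 while a visible one gets alpha 1.

```typescript
type Vec3 = [number, number, number];

// Simplified stand-in for a Unity box collider (assumption: axis-aligned).
interface Box { min: Vec3; max: Vec3; }

// Slab test: does the segment from `from` to `to` pass through the box?
function segmentHitsBox(from: Vec3, to: Vec3, box: Box): boolean {
  let tMin = 0; // parameter at the start of the segment
  let tMax = 1; // parameter at the end of the segment
  for (let axis = 0; axis < 3; axis++) {
    const d = to[axis] - from[axis];
    if (Math.abs(d) < 1e-9) {
      // Segment is parallel to this slab: it misses if it lies outside of it.
      if (from[axis] < box.min[axis] || from[axis] > box.max[axis]) return false;
      continue;
    }
    let t1 = (box.min[axis] - from[axis]) / d;
    let t2 = (box.max[axis] - from[axis]) / d;
    if (t1 > t2) { const tmp = t1; t1 = t2; t2 = tmp; }
    tMin = Math.max(tMin, t1);
    tMax = Math.min(tMax, t2);
    if (tMin > tMax) return false; // the per-axis intervals no longer overlap
  }
  return true;
}

// Assumed convention: 0.5 when a collider sits between camera and icon, 1 otherwise.
function iconAlpha(camera: Vec3, icon: Vec3, colliders: Box[]): number {
  const hidden = colliders.some((box) => segmentHitsBox(camera, icon, box));
  return hidden ? 0.5 : 1;
}
```

Testing a handful of cheap primitives per icon each frame is what keeps this affordable compared to raycasting against the full mesh.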

Additionally, spheres were placed where the icons would be in order to validate taps on the screen: a ray would be cast as well, and if a sphere was “touched”, we would zoom in to the hotspot view.
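As a rough illustration of that tap validation, here is a minimal TypeScript sketch of a ray-sphere test that returns the closest hotspot under the tap. The `Hotspot` shape and the function names are hypothetical; in three.js the ray itself would typically come from `THREE.Raycaster.setFromCamera`, which is left out here so the sketch stays self-contained.

```typescript
type Vec3 = [number, number, number];

// Hypothetical description of a tap sphere exported from the Unity scene.
interface Hotspot { id: string; center: Vec3; radius: number; }

const sub = (a: Vec3, b: Vec3): Vec3 => [a[0] - b[0], a[1] - b[1], a[2] - b[2]];
const dot = (a: Vec3, b: Vec3): number => a[0] * b[0] + a[1] * b[1] + a[2] * b[2];

// Distance parameter along the ray to the sphere, or null when the ray misses it.
function raySphereDistance(origin: Vec3, dir: Vec3, center: Vec3, radius: number): number | null {
  const oc = sub(origin, center);
  const a = dot(dir, dir);
  if (a === 0) return null; // degenerate direction
  const b = 2 * dot(dir, oc);
  const c = dot(oc, oc) - radius * radius;
  const disc = b * b - 4 * a * c;
  if (disc < 0) return null; // no intersection with the sphere
  const root = Math.sqrt(disc);
  const near = (-b - root) / (2 * a);
  const far = (-b + root) / (2 * a);
  if (far < 0) return null; // the sphere is entirely behind the ray origin
  return near >= 0 ? near : 0; // origin inside the sphere counts as an immediate hit
}

// Returns the id of the closest hotspot hit by the tap ray, or null if none is hit.
function pickHotspot(origin: Vec3, dir: Vec3, hotspots: Hotspot[]): string | null {
  let bestId: string | null = null;
  let bestT = Infinity;
  for (const h of hotspots) {
    const t = raySphereDistance(origin, dir, h.center, h.radius);
    if (t !== null && t < bestT) {
      bestT = t;
      bestId = h.id;
    }
  }
  return bestId;
}
```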

Camera positions, rotations and FOV were also set up in Unity’s editor to match the artists’ mockups as closely as possible.

Here, we would need the exact same camera positions for each hotspot. As a reminder, here’s the video from the unity version :

The Sub by Heineken from Da Viking Code on Vimeo.

So you see, many non-meshed 3D objects were used to identify, place, and pinpoint locations inside and around the object… We needed those as well, and just copying all the Transform data from Unity’s editor to JS files would take too much time.

We stumbled upon this Three.js exporter plugin for Unity though :

https://www.assetstore.unity3d.com/en/#!/content/40550

Thankfully this exported everything we needed… The model itself too !!!

Sadly, this lost the smooth shading of every surface, so we had to use three.js’s built-in geometry functions to recalculate normals and so on, but the result is still great for what we needed.
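For readers unfamiliar with what recalculating normals involves, here is a minimal TypeScript sketch of smooth (averaged) vertex normals on an indexed triangle mesh. It is an illustration only: in the project this job was left to three.js (for instance `computeVertexNormals()` on a geometry), and the function name `computeSmoothNormals` is hypothetical. It assumes shared vertices are actually shared through the index list; duplicated vertices would still shade flat.

```typescript
// positions: flat [x0, y0, z0, x1, y1, z1, ...]; indices: three entries per triangle.
function computeSmoothNormals(positions: number[], indices: number[]): number[] {
  const normals: number[] = positions.map(() => 0);

  for (let i = 0; i + 2 < indices.length; i += 3) {
    const ia = indices[i] * 3;
    const ib = indices[i + 1] * 3;
    const ic = indices[i + 2] * 3;

    // Two edges of the triangle.
    const e1x = positions[ib] - positions[ia];
    const e1y = positions[ib + 1] - positions[ia + 1];
    const e1z = positions[ib + 2] - positions[ia + 2];
    const e2x = positions[ic] - positions[ia];
    const e2y = positions[ic + 1] - positions[ia + 1];
    const e2z = positions[ic + 2] - positions[ia + 2];

    // The cross product gives the face normal; its length weights larger faces more.
    const nx = e1y * e2z - e1z * e2y;
    const ny = e1z * e2x - e1x * e2z;
    const nz = e1x * e2y - e1y * e2x;

    // Accumulate the face normal on each of the triangle's three vertices.
    for (const v of [ia, ib, ic]) {
      normals[v] += nx;
      normals[v + 1] += ny;
      normals[v + 2] += nz;
    }
  }

  // Normalize the accumulated sums so every vertex normal has unit length.
  for (let v = 0; v + 2 < normals.length; v += 3) {
    const len = Math.sqrt(normals[v] ** 2 + normals[v + 1] ** 2 + normals[v + 2] ** 2);
    if (len > 0) {
      normals[v] /= len;
      normals[v + 1] /= len;
      normals[v + 2] /= len;
    }
  }
  return normals;
}
```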

Shaders

The Unity version is completely rendered (except for sprite shaders) with custom shaders created with Shader Forge. They were easy enough to rewrite for WebGL, though. We used the online shader editor ShaderFrog to live-code and visualize them before implementing them in three.js. This was a real time saver.

Anyway, the important shader features we needed here were outlines. The subtle fresnel effect on objects that are ‘inside’ is easy enough but for outlines, we needed a two pass shader which unfortunately couldn’t be done in three.js – at least not as far as we were aware at the time, so we hacked things a bit.

Basically, the outlined objects are rendered in a separate scene, twice: once in dark grey with scaled vertex positions so everything is a bit bigger (scaled from the center; that’s the outline bit), and then a second time with the right color/material parameters.

Before each draw, we just set the material parameters correctly. This way we achieve the outline effect easily. It is pretty performance intensive, but it’s a key aspect of the “technical drawing” look, so we couldn’t go without it. There are supposedly other, more interesting techniques, but this one was fast to implement.
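To make the two draws concrete, here is a small TypeScript sketch of the data side of the trick: scaling vertex positions away from a center, and the ordered list of passes. Everything here is hypothetical (the names, the grey value and the 1.03 scale factor are placeholders), and in the real app the scaling happened through material parameters rather than on the CPU.

```typescript
type Vec3 = [number, number, number];

// One draw of the outlined object: which color to use and how much to inflate it.
interface OutlinePass { color: string; scale: number; }

// Placeholder dark grey for the outline pass (assumed value).
const OUTLINE_GREY = "#333333";

// Scale a flat [x, y, z, ...] position list away from `center`.
function scaleFromCenter(positions: number[], center: Vec3, scale: number): number[] {
  return positions.map((p, i) => center[i % 3] + (p - center[i % 3]) * scale);
}

// First the slightly bigger dark-grey copy, then the object with its real color.
function outlinePasses(baseColor: string, outlineScale: number = 1.03): OutlinePass[] {
  return [
    { color: OUTLINE_GREY, scale: outlineScale }, // pass 1: the outline
    { color: baseColor, scale: 1 },               // pass 2: the object itself
  ];
}
```

Because the second draw covers the first everywhere except along the edges, only a thin dark rim of the inflated copy stays visible.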

Animation

There’s a very important view where the liquid is compressed and flows out of a tube. In Unity this meant animated ‘blend shapes’ and a custom shader for the tube (explained in the other article).

The shaderlab version of the tube shader was recreated, easily, for the three.js version : we’re still using a black to white gradient with a threshold value to make the liquid appear above the threshold value and not below, this way animating this value moves the liquid up and down the tube.
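A threshold shader of this kind could look roughly like the sketch below, written in TypeScript with the GLSL kept as a string, as one would pass it to a three.js `ShaderMaterial`. The uniform and varying names (`gradientMap`, `threshold`, `liquidColor`, `vUv`) are assumptions, as is the choice of reading the gradient from a texture; the original shader is not reproduced here. The two small functions mirror the same logic on the CPU.

```typescript
// Hypothetical fragment shader: pixels whose gradient value is below the
// threshold are discarded, so only the "filled" part of the tube is drawn.
const tubeFragmentShader = `
uniform sampler2D gradientMap; // black-to-white gradient along the tube (assumed)
uniform float threshold;       // animated between 0.0 and 1.0
uniform vec3 liquidColor;
varying vec2 vUv;

void main() {
  float g = texture2D(gradientMap, vUv).r;
  if (g < threshold) discard;
  gl_FragColor = vec4(liquidColor, 1.0);
}
`;

// CPU mirror of the shader test: is the liquid drawn at this gradient value?
function liquidVisible(gradient: number, threshold: number): boolean {
  return gradient >= threshold;
}

// Threshold for an animation progress in [0, 1]: 1 hides the liquid, 0 shows all of it.
function thresholdAt(progress: number, empty: number = 1, full: number = 0): number {
  const t = Math.min(1, Math.max(0, progress));
  return empty + (full - empty) * t;
}
```

Animating a single uniform is enough to move the liquid along the tube, which is why the gradient trick ports so easily from one shader language to another.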

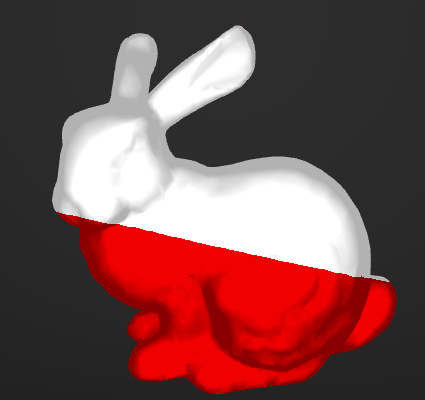

For the capsule though, blend shapes couldn’t be exported. We opted for a custom vertex shader to compress the capsule programmatically. Why not offload a bit of mesh animation to the GPU?
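As an illustration of the idea, and not the actual deformation used in the app, a vertex shader can squash a mesh along one axis with a single uniform. In this TypeScript sketch the uniform names `compression` and `baseY`, and the choice of the Y axis, are assumptions; `position`, `modelViewMatrix` and `projectionMatrix` are the built-ins three.js provides to a `ShaderMaterial`. The function below the shader applies the same formula on the CPU.

```typescript
// Hypothetical vertex shader: squash the mesh toward the plane y = baseY.
const capsuleVertexShader = `
uniform float compression; // 0.0 = rest shape, 1.0 = fully flattened
uniform float baseY;       // height of the plane the capsule is pressed against

void main() {
  vec3 p = position;
  p.y = baseY + (p.y - baseY) * (1.0 - compression);
  gl_Position = projectionMatrix * modelViewMatrix * vec4(p, 1.0);
}
`;

type Vec3 = [number, number, number];

// CPU mirror of the shader formula, handy for checking values offline.
function compressVertex(p: Vec3, baseY: number, compression: number): Vec3 {
  return [p[0], baseY + (p[1] - baseY) * (1 - compression), p[2]];
}
```

Compared to blend shapes, this needs no extra vertex data: the animation is driven entirely by one number updated each frame.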

Everything else is just like the Unity version. The fan rotates around its local z axis, bubbles in the glass are particles…

And almost every material is animated on zoom in or out of the camera.

Cross platform

Because this project was not expected when the first Unity version was created, the choices made back then did not lead to a fully cross-platform solution here.

Unlike other projects, we’re not sharing 99% of the code base from one project to the other.

However the truth is these two projects are more graphical than functional. The important thing was to translate data from the original scene to be used in three.js (parameters, positions, rotations, ids) and also to translate visual aspect by translating the shaders.

So when I wrote earlier that we had to do everything from scratch, that was not quite the whole story. Because most of the required data was stored and accessible inside the Unity scene, and thanks to the Unity editor’s extensibility, we were able to extract the required info and re-use it. That left us to code only the few remaining JS-specific aspects of the app.

We can go even further and conclude that, because the Unity editor is extensible, building 3D scenes in it for three.js or any other framework is a viable way to get close to a cross-platform solution between native and browser.
